Computer vision for accessibility and inclusion

This project explored Indian Sign Language (ISL) recognition using convolutional neural networks, aiming to support accessibility through computer vision.

Beyond technical performance, the project emphasized inclusive AI, showing how machine learning can be applied to socially meaningful problems.

Object detection is a computer vision task that locates instances of objects belonging to given classes in digital images and videos. Unlike classification-only approaches, object detection returns both a class label and a bounding box for each detected instance.
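To make the difference in output concrete, here is a minimal sketch of what a classifier and a detector might each return. The `Detection` type, the field names, and the sign labels "A" and "B" are hypothetical and chosen only for illustration; they are not taken from the project's code.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    """One detected instance: a class label plus where it is in the image."""
    label: str
    score: float
    # Box as (x_min, y_min, width, height) in pixels (an assumed convention).
    box: Tuple[int, int, int, int]


def box_corners(det: Detection) -> Tuple[int, int, int, int]:
    """Convert (x_min, y_min, width, height) to (x_min, y_min, x_max, y_max)."""
    x, y, w, h = det.box
    return (x, y, x + w, y + h)


# A classifier answers "what is in this image?" with a single label.
classification_result: str = "A"

# A detector answers "what is where?" with one entry per instance.
detection_result: List[Detection] = [
    Detection(label="A", score=0.91, box=(40, 60, 120, 150)),
    Detection(label="B", score=0.78, box=(300, 80, 110, 140)),
]

corners = [box_corners(d) for d in detection_result]
```

The bounding box is what lets later stages crop or track the hand region instead of treating the whole frame as one input.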

YOLO (You Only Look Once) is a real-time object detection system. It frames detection as a single regression problem, mapping image pixels directly to bounding box coordinates and class probabilities in one forward pass, which makes it computationally cheaper than multi-stage detectors.
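The "single regression" idea can be sketched as decoding a grid of numbers into boxes. The example below is a simplified illustration loosely following the original YOLO formulation, assuming one box per grid cell; Tiny YOLO variants built on later YOLO versions use anchor boxes and a different box parameterization. The function name `decode_grid`, the threshold value, and the class names are assumptions for illustration, not the project's implementation.

```python
from typing import List, Sequence, Tuple

# (label, score, (center_x, center_y, width, height)), all in 0..1 image units.
Box = Tuple[str, float, Tuple[float, float, float, float]]


def decode_grid(
    pred: Sequence[Sequence[Sequence[float]]],
    class_names: Sequence[str],
    score_threshold: float = 0.5,
) -> List[Box]:
    """Decode a square grid of raw predictions into detections.

    pred[row][col] = [x, y, w, h, objectness, p_class0, p_class1, ...]
    where x, y are offsets inside the cell and w, h are relative to the image.
    """
    grid_size = len(pred)
    detections: List[Box] = []
    for row in range(grid_size):
        for col in range(grid_size):
            x, y, w, h, objectness, *class_probs = pred[row][col]
            best = max(range(len(class_probs)), key=class_probs.__getitem__)
            # Class-specific confidence: objectness times class probability.
            score = objectness * class_probs[best]
            if score < score_threshold:
                continue
            # Shift the in-cell offset by the cell index, then normalize.
            center_x = (col + x) / grid_size
            center_y = (row + y) / grid_size
            detections.append((class_names[best], score, (center_x, center_y, w, h)))
    return detections


# A 2x2 grid where only the bottom-left cell contains a confident prediction.
empty = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
grid = [
    [empty, empty],
    [[0.5, 0.5, 0.4, 0.6, 0.9, 0.1, 0.8], empty],
]
result = decode_grid(grid, class_names=["A", "B"])
```

Every cell is decoded in the same pass, so there is no separate region-proposal stage. In practice a non-maximum suppression step usually follows to remove duplicate boxes; it is omitted here for brevity.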

Traditional hand-crafted features often fail to generalize across all signs, especially as the symbol set grows. To address this, object detection (specifically Tiny YOLO) was introduced for ISL recognition.

Other common detection models include R-CNN, Faster R-CNN, and SSD. While these are effective, they typically require more computation or rely on multi-stage pipelines. Tiny YOLO provides a strong balance of speed and accuracy for this use case.

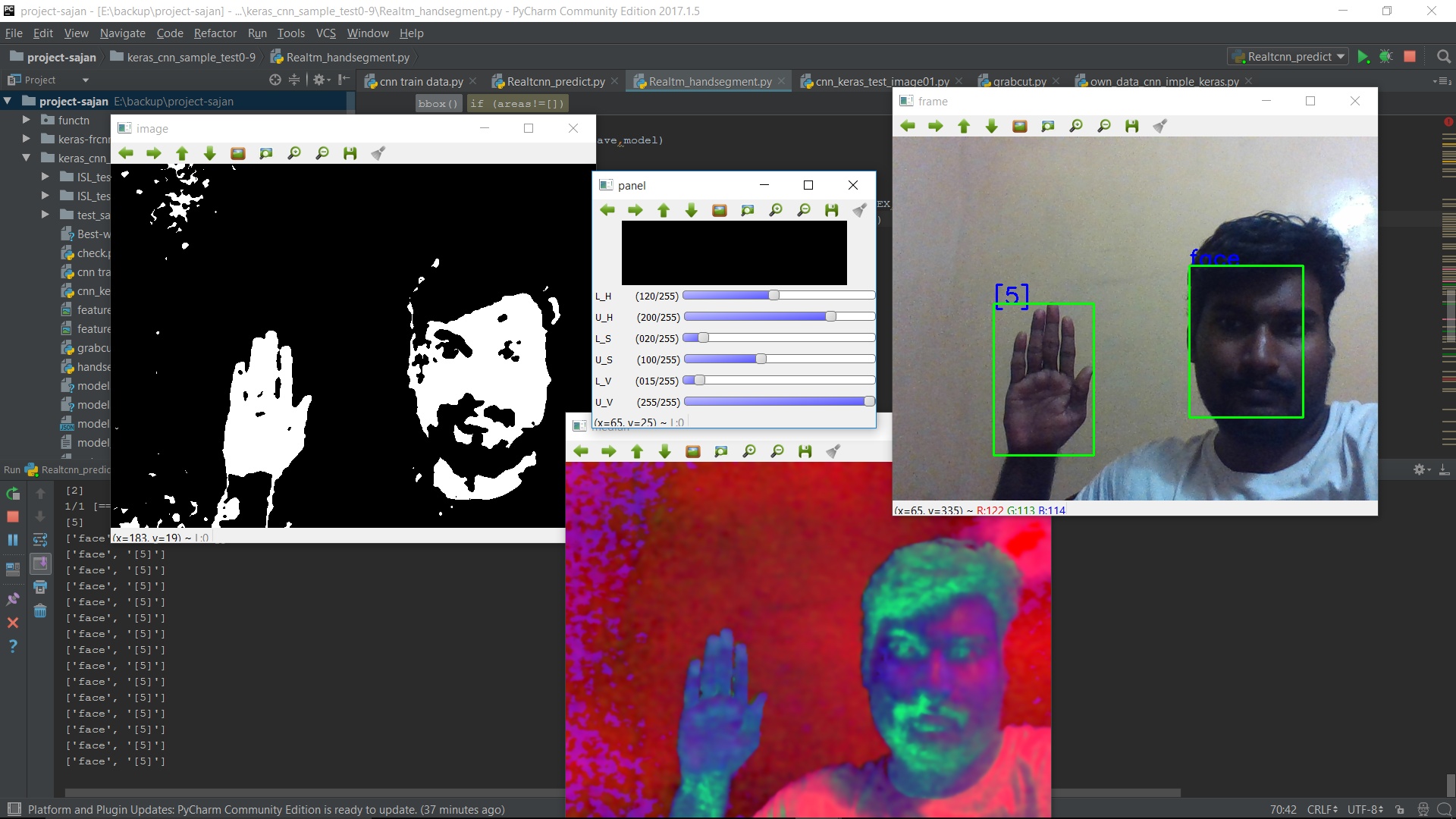

An initial threshold-based method was used for sign segmentation, but it failed under varying lighting conditions. The updated pipeline uses Tiny YOLO trained on a diverse dataset to handle different positions, backgrounds, and illumination.
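The lighting problem can be illustrated with a toy example. The sketch below assumes a fixed global intensity threshold on a grayscale image. The original notes do not say which thresholding variant was used, so the function names, the threshold of 128, and the pixel values are all illustrative assumptions.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel intensities in 0..255


def threshold_mask(image: Image, threshold: int = 128) -> Image:
    """Mark pixels brighter than a fixed threshold as foreground (1)."""
    return [[1 if px > threshold else 0 for px in row] for row in image]


def scale_brightness(image: Image, factor: float) -> Image:
    """Simulate a lighting change by scaling every pixel, clipped to 255."""
    return [[min(255, int(px * factor)) for px in row] for row in image]


# A bright 2x2 "hand" region on a dark background.
well_lit: Image = [
    [50, 50, 50, 50],
    [50, 200, 200, 50],
    [50, 200, 200, 50],
    [50, 50, 50, 50],
]
dim = scale_brightness(well_lit, 0.5)  # same scene at half the illumination

hand_pixels_well_lit = sum(map(sum, threshold_mask(well_lit)))
hand_pixels_dim = sum(map(sum, threshold_mask(dim)))
```

With the same threshold, the hand region is segmented in the well-lit image but disappears entirely in the dim one. A detector trained on images captured under varied illumination is meant to avoid exactly this kind of failure.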

Screenshots: annotation examples referenced as “Screenshot (119)” and “Screenshot (120)” in the original notes.

For detection models, real-time robustness depends heavily on dataset quality and quantity.

Deep learning models are computationally intensive; GPU acceleration is recommended. CPU-only training is possible but significantly slower (often days). For deployment, Tiny YOLO offers practical real-time performance on modest hardware.